What Is Generative AI? A Plain-English Guide for 2026

By Sanso Uka

Generative AI is a category of artificial intelligence that can produce new content — text, images, audio, video, or code — based on patterns learned from large amounts of existing data. Unlike older AI systems that were built to classify, sort, or detect things, generative AI actually creates something new every time you interact with it. If you’ve ever typed a question and gotten a full written answer back, or described a scene and watched an image appear, you’ve already used it. The technology moved from research labs to everyday tools fast, and by 2026 it’s embedded in everything from search engines to photo editors.

How It Actually Works (Without the Jargon)

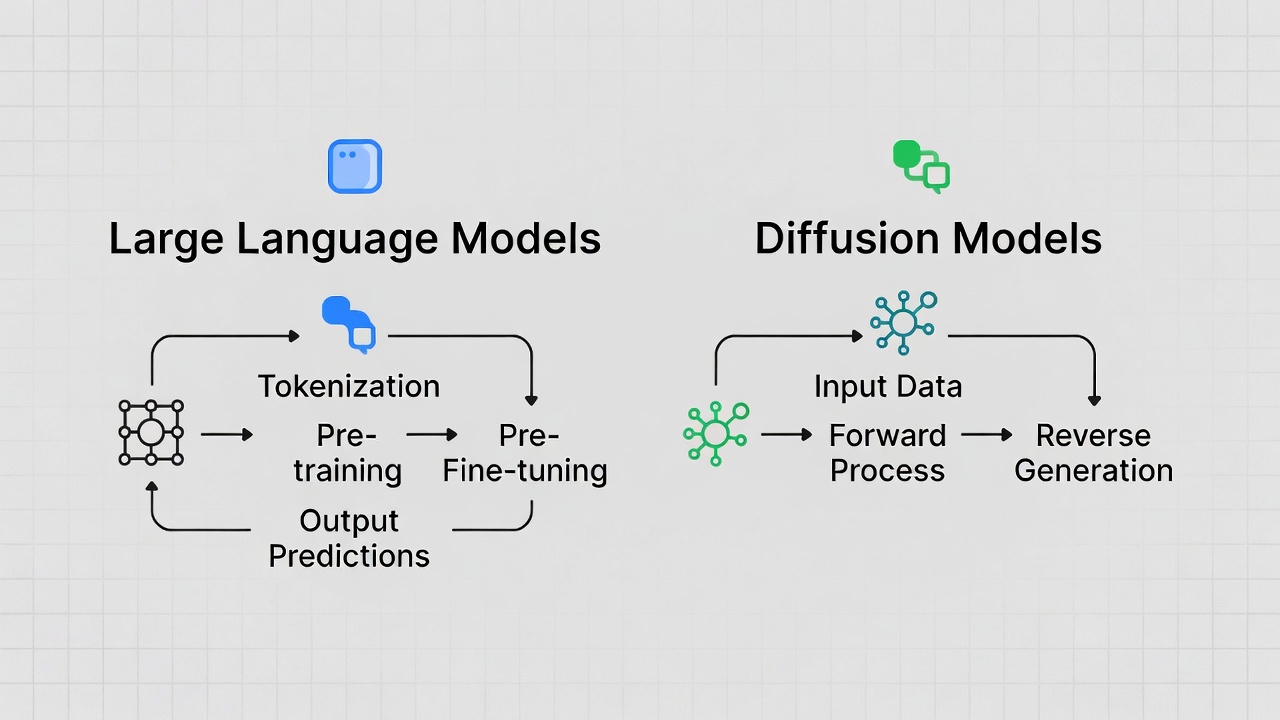

Most generative AI systems fall into one of two main families: large language models (LLMs) for text and code, and diffusion models for images and video. Both share the same core idea: train a model on an enormous dataset so it learns the statistical patterns in that data, then have it generate new output that fits those patterns, guided by whatever input you give it.

A large language model, for example, is trained on text from books, websites, and other sources, often hundreds of billions to trillions of words in total. It learns statistical relationships between words, phrases, and ideas. When you ask it a question, it doesn’t “look up” an answer. It generates one by predicting what a coherent, relevant response would look like, token by token (a token is a word or a piece of a word).
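To make “token by token” concrete, here is a toy sketch in Python. It is not how a real LLM is built (those use neural networks with billions of parameters); it only counts which word follows which in a tiny made-up corpus, then generates text by repeatedly sampling a likely next word. The corpus and function names are invented for illustration.

```python
import random
from collections import Counter, defaultdict

def train_bigram(text):
    """Count how often each word follows each other word."""
    tokens = text.split()
    counts = defaultdict(Counter)
    for current, following in zip(tokens, tokens[1:]):
        counts[current][following] += 1
    return counts

def generate(counts, start, max_tokens=8, seed=0):
    """Generate text one token at a time, sampling each next word in
    proportion to how often it followed the current word in training."""
    rng = random.Random(seed)
    output = [start]
    for _ in range(max_tokens):
        followers = counts.get(output[-1])
        if not followers:  # no known continuation, so stop
            break
        words = list(followers)
        weights = [followers[w] for w in words]
        output.append(rng.choices(words, weights=weights, k=1)[0])
    return " ".join(output)

# Tiny made-up corpus, standing in for the huge datasets real models use.
corpus = "the cat sat on the mat and the cat slept on the sofa"
model = train_bigram(corpus)
print(generate(model, "the"))
```

The output can read like a plausible sentence even though the program has no idea what a cat or a sofa is. It only knows which words tend to follow which.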

Diffusion models work differently. They learn to remove “noise” from corrupted images until something coherent emerges. During training, images are progressively scrambled; the model learns to reverse that process. When you prompt it with “a cat sitting on a moon made of cheese,” it generates an image by iteratively refining random noise into something matching your description.
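The scramble-then-reverse idea can be sketched in a few lines of Python. This is a simplified illustration, not a working image generator: the “image” is a list of five numbers, the noise schedule is an arbitrary linear one, and the true noise stands in for the prediction a trained neural network would make. Real systems also denoise over many small steps rather than one.

```python
import math
import random

def alpha_bar(t, steps):
    """Fraction of the original signal left after t of `steps` noising steps.
    A simple linear schedule, chosen for illustration only."""
    return 1.0 - 0.999 * t / steps

def add_noise(x0, t, steps, rng):
    """Forward process: blend the clean data with Gaussian noise."""
    ab = alpha_bar(t, steps)
    eps = [rng.gauss(0.0, 1.0) for _ in x0]
    xt = [math.sqrt(ab) * x + math.sqrt(1.0 - ab) * e for x, e in zip(x0, eps)]
    return xt, eps

def remove_noise(xt, predicted_eps, t, steps):
    """Undo the blend, given a prediction of the noise that was added."""
    ab = alpha_bar(t, steps)
    return [(x - math.sqrt(1.0 - ab) * e) / math.sqrt(ab)
            for x, e in zip(xt, predicted_eps)]

rng = random.Random(0)
clean = [0.0, 0.5, 1.0, 0.5, 0.0]  # stand-in for a tiny "image"
noisy, true_eps = add_noise(clean, t=800, steps=1000, rng=rng)

# A trained network would have to estimate the noise from `noisy` alone.
# Here the true noise is used as a stand-in for a perfect prediction.
restored = remove_noise(noisy, true_eps, t=800, steps=1000)
```

With a perfect noise estimate, `restored` matches `clean` up to rounding error. Training is the process of making the network’s estimate good enough that the same trick works when it starts from pure random noise and a text prompt.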

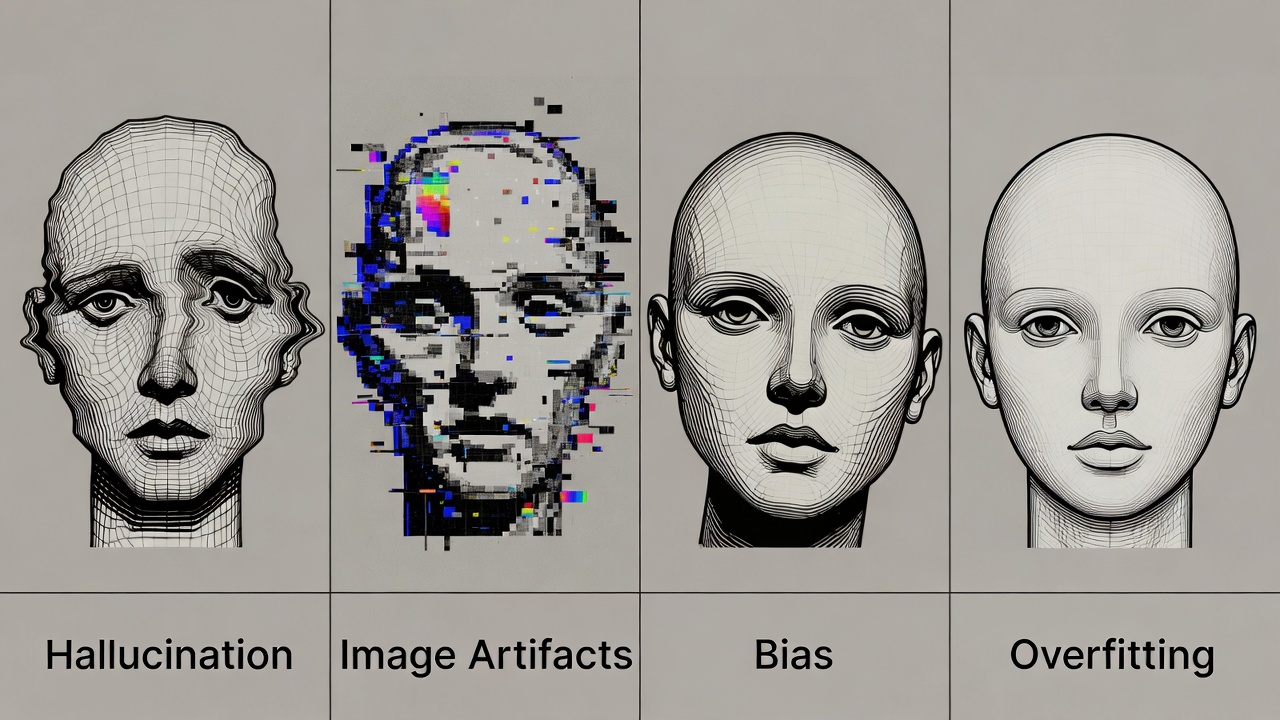

Neither approach “understands” anything the way a human does. They find patterns. That’s powerful, and it’s also the root of their most significant limitation: output that fits the pattern can still be factually wrong.

What Generative AI Can Actually Do

The practical applications split across a few main categories:

- Text generation: Writing drafts, summarizing long documents, answering questions, translating between languages, generating code. Tools like Claude, GPT-4o, and Gemini 1.5 Pro operate in this space.

- Image generation: Creating visuals from text prompts. Midjourney, DALL·E 3, and Stable Diffusion are the main players. Output quality has reached a point where generated images are routinely mistaken for photos.

- Audio and music: ElevenLabs can clone a voice from a short sample and generate speech in that voice. Suno and Udio generate full songs — vocals included — from a text description.

- Video: Still the hardest category, but Sora and Runway Gen-3 can produce short clips that hold together reasonably well. Long-form coherent video remains a work in progress.

- Code assistance: GitHub Copilot, Cursor, and similar tools suggest code completions, explain functions, and debug errors. Developers report meaningful productivity gains, though the tools still produce bugs and require review.

For a broader look at how these tools are reshaping everyday software, check out our coverage of AI tools and chatbots and what they’re actually worth using in 2026.

Where It Falls Short

Generative AI has a well-documented problem called hallucination — it produces confident-sounding statements that are simply wrong. Ask a language model about a niche legal ruling, a specific medical study, or an obscure historical date, and there’s a real chance it will fabricate details. It doesn’t flag uncertainty reliably; it just keeps generating.

Image generators still struggle with hands (fingers remain a known weak point) and with rendering accurate text inside images. Video generators struggle to keep objects and scenes consistent from one frame to the next. Audio models can produce unnervingly realistic voices, which creates obvious misuse risks.

On the technical side, these models are expensive to run. A single query to a frontier model costs fractions of a cent, but at scale those fractions add up to enormous infrastructure costs. That’s partly why most capable generative AI tools sit behind a subscription — expect to pay $10–$30/month for serious use of any leading platform.
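Here is the back-of-the-envelope math behind “those fractions add up,” as a short Python sketch. Every number in it is hypothetical, picked only to show how the multiplication scales. None of them are real pricing or usage figures for any provider.

```python
def monthly_cost(cost_per_query, queries_per_user_per_day, users, days=30):
    """Total monthly spend for a service at a given per-query cost."""
    return cost_per_query * queries_per_user_per_day * users * days

# All figures below are hypothetical, chosen only to show the scaling.
per_query = 0.002  # dollars per query, i.e. a fifth of a cent
users = 1_000_000
total = monthly_cost(per_query, queries_per_user_per_day=20, users=users)
per_user = total / users

print(f"total: ${total:,.0f}/month, per user: ${per_user:.2f}/month")
```

With these made-up inputs, a fifth of a cent per query turns into roughly $1.2 million a month across a million users, or about $1.20 per user. Heavier usage or a pricier model pushes that per-user figure up quickly.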

There are also unresolved questions around copyright. Training data for many models includes copyrighted text and images scraped from the web without explicit permission. Several lawsuits are ongoing as of early 2026, and the legal landscape will likely shift.

For a deeper look at the research side, the GPT-4 Technical Report on arXiv is worth reading if you want to understand what a frontier model can actually do and where its limits lie.

Generative AI vs. Traditional AI — What’s the Difference?

Traditional AI systems were built to do one specific thing well: recognize a face, flag a fraudulent transaction, recommend a product. They’re trained on labeled data and optimized for narrow prediction tasks. They don’t create — they classify.

Generative AI is built for open-ended output. Instead of answering “is this image a cat: yes or no,” it answers “generate an image of a cat in the style of a 1970s science fiction paperback cover.” That flexibility is what makes it so broadly useful — and harder to contain or audit.

If you’re curious about where this is all going, we’ve got a breakdown of future AI trends worth bookmarking.

Should You Be Using It?

For most people, yes — with clear eyes about what it is. Generative AI is genuinely useful for drafting, brainstorming, summarizing, and prototyping. It saves real time on repetitive tasks. But it requires verification. Treat its outputs as a first draft, not a final answer. If you’re using it for anything factual — medical, legal, financial — double-check against primary sources.

If you’re a developer or someone working with data, tools like GitHub Copilot or Claude’s API can meaningfully accelerate your workflow. If you’re a writer or designer, image and text tools can handle rough drafts and reference sketches, freeing you to focus on judgment and refinement. You can also explore how machine learning underpins many of the tools you’re already using daily.

The Bottom Line

Generative AI is not magic and it’s not hype — it’s a practical category of tools that create new content by learning from existing data. It’s genuinely capable, genuinely useful, and genuinely limited. It hallucinates facts, struggles with certain media types, costs money at scale, and sits in unresolved legal territory around training data.

The best way to approach it is like any other powerful tool: learn what it does well, know where it fails, and keep a human in the loop for anything that matters. Start with a free tier on one of the major platforms — Claude, Gemini, or GPT-4o — give it a task you already know the answer to, and evaluate the output yourself. That hands-on test will tell you more than any benchmark.